Most AI architectures survive the developer demo but fail the regulator's audit. To close this gap, we must synthesize global governance frameworks with local cloud rigor.

In the regulated world, "building it" is only half the battle. The other half is proving that it’s safe, fair, and compliant. When I look at external frameworks like the AI Architecture Audit methodology, I don't just see a checklist; I see a blueprint for architectural integrity.

In this post, I’ll show you how to map these high-level audit principles directly to Azure-native technical controls. This is how you move from a "Trust me, it's safe" posture to a "Here is the proof" posture.

The Gap: Demo-Ready vs. Regulator-Ready

| Audit Pillar | The Architecture "Demo" | The "Regulator" Reality |
|---|---|---|
| Governance | Informal trust & manual access logs. | Azure Policy 'Deny' & automated guardrails. |
| Data Quality | Raw storage buckets with no metadata. | Microsoft Purview end-to-end lineage. |
| Security | API keys embedded in app config files. | AI Gateway (APIM) with Zero-Trust. |
| Explainability | Opaque LLM outputs with no monitoring. | Responsible AI Dashboards. |

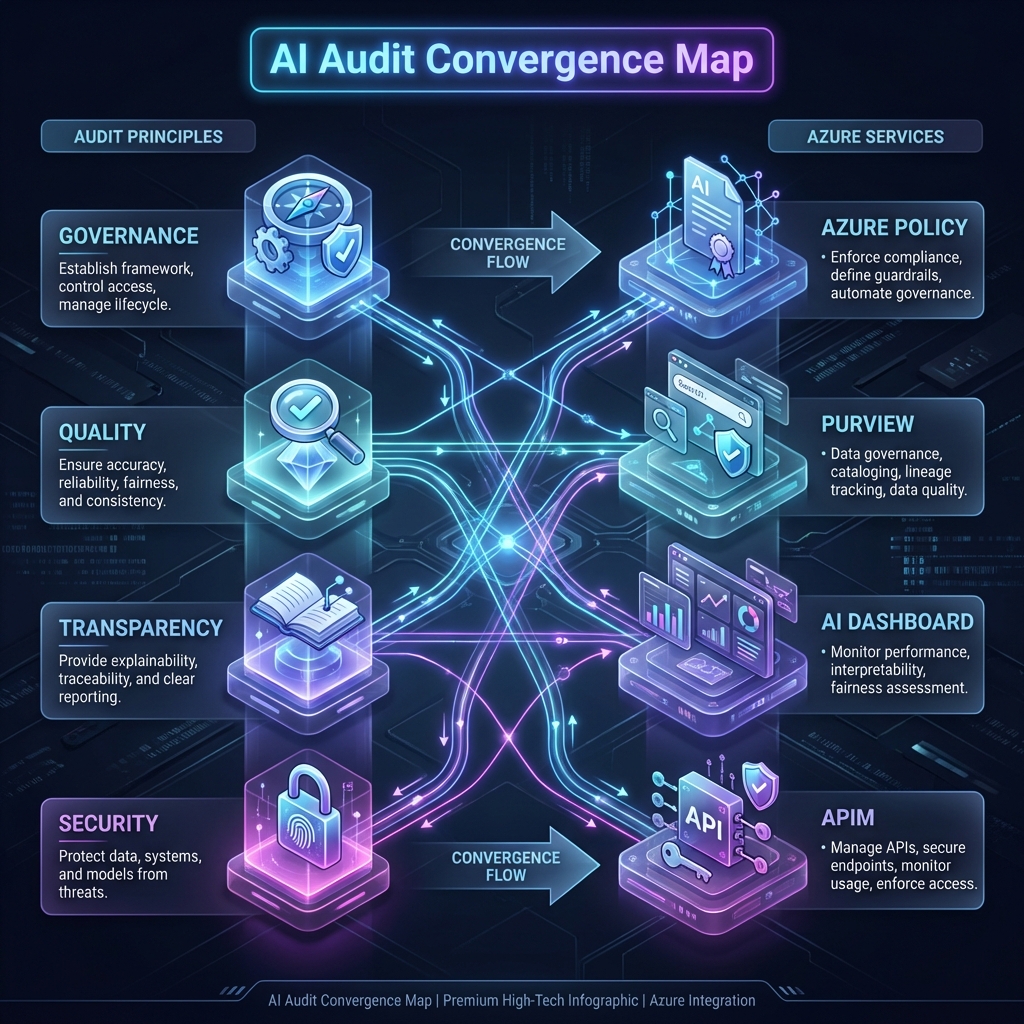

The Convergence Map: Mapping abstract Audit Principles to concrete Azure Service implementations.

1. Governance & Accountability

An audit starts by asking: "Who is responsible if the model's hallucinations cause a financial loss?"

The Azure Implementation: We don't just assign roles; we bake them into the infrastructure. By combining Microsoft Entra ID (formerly Azure Active Directory) with Azure Policy, we enforce a "Governance-as-Code" model. If a developer tries to deploy an AI model outside of a compliant Landing Zone, a policy assignment with the 'Deny' effect rejects the deployment request before the resource is created.
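To make the 'Deny' guardrail concrete, here is a minimal sketch that builds a policy definition as a Python dictionary. The JSON shape follows the Azure Policy definition structure (`policyRule`, `if`/`then`, `effect`), but the allowed-region rule, the display name, and the small local evaluator are my own illustrative assumptions standing in for whatever "compliant Landing Zone" means in your environment. The evaluator only understands `allOf`, `equals`, and `notIn`; it is a teaching aid, not the Azure Policy engine.

```python
import json


def build_deny_policy(allowed_locations):
    """Build a policy definition that denies AI accounts outside approved regions."""
    return {
        "properties": {
            # Hypothetical display name, chosen for this example only.
            "displayName": "Deny AI services outside approved regions",
            "mode": "Indexed",
            "policyRule": {
                "if": {
                    "allOf": [
                        {"field": "type",
                         "equals": "Microsoft.CognitiveServices/accounts"},
                        {"field": "location",
                         "notIn": list(allowed_locations)},
                    ]
                },
                "then": {"effect": "deny"},
            },
        }
    }


def _matches(condition, resource):
    """Toy condition matcher; comparisons are case-insensitive."""
    if "allOf" in condition:
        return all(_matches(c, resource) for c in condition["allOf"])
    value = str(resource.get(condition["field"], "")).lower()
    if "equals" in condition:
        return value == condition["equals"].lower()
    if "notIn" in condition:
        return value not in [v.lower() for v in condition["notIn"]]
    raise ValueError(f"unsupported condition: {condition}")


def evaluate(policy, resource):
    """Return the policy effect if the resource matches the rule, else None."""
    rule = policy["properties"]["policyRule"]
    return rule["then"]["effect"] if _matches(rule["if"], resource) else None


if __name__ == "__main__":
    policy = build_deny_policy(["westeurope", "northeurope"])
    print(json.dumps(policy, indent=2))
```

The printed JSON is the kind of artifact you would keep in source control next to your landing zone templates, which is what gives an auditor a versioned history of the guardrail.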

2. Data Quality & Integrity

The audit framework asks: "Is the training data clean, lawful, and secured?"

3. Transparency & Explainability

"Can you explain why the AI reached this conclusion?" This is the "Magic Box" problem.

By integrating the Responsible AI Dashboard within Azure Machine Learning, we generate error analysis and feature importance reports. We move the transparency from a PDF manual into a living, real-time dashboard.
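If "feature importance" sounds abstract, the idea can be sketched in a few lines. The example below uses permutation importance, a generic technique I am swapping in for illustration; it is not the Responsible AI Dashboard's own explainer, and the toy loan model, feature names, and data are all hypothetical. The intuition: shuffle one feature at a time and measure how much accuracy drops.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction equals the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, features, repeats=20, seed=0):
    """Average accuracy drop when each feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importance = {}
    for feature in features:
        drops = []
        for _ in range(repeats):
            column = [r[feature] for r in rows]
            rng.shuffle(column)
            shuffled = [{**r, feature: v} for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importance[feature] = sum(drops) / repeats
    return importance


def approve(row):
    """Hypothetical model: approves purely on income, ignores account age."""
    return row["income"] > 50


incomes = [20, 30, 40, 45, 60, 70, 80, 90]
ages = [5, 1, 8, 3, 2, 7, 4, 6]
rows = [{"income": i, "account_age": a} for i, a in zip(incomes, ages)]
labels = [approve(r) for r in rows]

scores = permutation_importance(approve, rows, labels, ["income", "account_age"])
```

Because the toy model never reads `account_age`, shuffling that column cannot change a prediction, so its score is zero while `income` carries all of the importance. That is the kind of statement an auditor wants to see backed by a report rather than an assertion.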

From the Post-Mortem Trenches

In my experience, auditors don't ask for a code review; they ask for evidence of consistency. A screenshot of a policy compliance dashboard is worth more than 1,000 pages of technical documentation.

4. Security & The "AI Fortress"

The framework requires "adequate information security." In the cloud, this means Zero Trust.

We implement this via the AI Gateway Pattern. No direct access to LLMs. Everything flows through Azure API Management, which handles the JWT validation, rate limiting, and full-stack logging required for a GRC audit.
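The three gateway responsibilities (token validation, rate limiting, audit logging) can be sketched in plain Python to show the order of checks. This is a conceptual model under stated assumptions, not APIM itself: in Azure API Management these steps are configured as policies, tokens are typically validated against your identity provider's signing keys rather than a shared secret, and the class name, HS256 demo signing, and log fields below are all hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from collections import defaultdict, deque
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(segment: str) -> bytes:
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Create a demo HS256 token, used here only to exercise the gateway."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def verify_hs256(token: str, secret: bytes, now: float) -> Optional[dict]:
    """Return the claims if algorithm, signature, and expiry check out."""
    try:
        header, payload, sig = token.split(".")
        if json.loads(_b64url_decode(header)).get("alg") != "HS256":
            return None
        expected = hmac.new(secret, f"{header}.{payload}".encode(),
                            hashlib.sha256).digest()
        if not hmac.compare_digest(expected, _b64url_decode(sig)):
            return None
        claims = json.loads(_b64url_decode(payload))
    except (ValueError, AttributeError):
        return None
    if not isinstance(claims, dict) or claims.get("exp", 0) <= now:
        return None
    return claims


class AIGateway:
    """Toy gateway: every call is authenticated, throttled, and logged."""

    def __init__(self, secret: bytes, limit: int, window_seconds: float):
        self.secret = secret
        self.limit = limit
        self.window = window_seconds
        self.calls = defaultdict(deque)   # caller -> timestamps of recent calls
        self.audit_log = []               # one entry per request, allowed or not

    def handle(self, token: str, prompt: str, now: Optional[float] = None) -> int:
        now = time.time() if now is None else now
        claims = verify_hs256(token, self.secret, now)
        if claims is None:
            return self._record(now, "anonymous", 401)
        caller = claims.get("sub", "unknown")
        recent = self.calls[caller]
        while recent and recent[0] <= now - self.window:
            recent.popleft()              # drop calls outside the sliding window
        if len(recent) >= self.limit:
            return self._record(now, caller, 429)
        recent.append(now)
        # A real gateway would forward `prompt` to the model backend here.
        return self._record(now, caller, 200)

    def _record(self, now: float, caller: str, status: int) -> int:
        self.audit_log.append({"ts": now, "caller": caller, "status": status})
        return status
```

Note that rejected requests are logged too. For a GRC audit, the denied calls are often the more interesting evidence.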

Operationalize: The ALZ Assessment Tool

Frameworks are useless without measurement. To move from theory to reality, I recommend the Azure ALZ Modern Assessment Tool.

Azure ALZ Modern Assessment

A comprehensive, web-based tool that evaluates your Azure implementation against official Microsoft FastTrack best practices. It features automated updates for AI Landing Zone checklists and generates executive-ready PowerPoint reports.

5. Financial Governance & FinOps

An often-overlooked audit pillar is Cloud Financial Management. If an AI service is running without cost guardrails, it isn't just a budget risk; it's a governance failure.

We implement "Structural FinOps" by leveraging Azure Budgets and Cost Alerts. As recommended in Thomas Maurer's FinOps assessments, we ensure that every AI workload is tagged for accountability, allowing the organization to justify the ROI of its innovation.
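The two checks described above, tag accountability and budget thresholds, are simple enough to sketch. This is an offline illustration over hand-written data, not a call into any Azure API; the required tag names, threshold percentages, and resource records are my assumptions, so substitute your own tagging standard.

```python
# Hypothetical tagging standard: every AI workload needs these tags.
REQUIRED_TAGS = ("owner", "cost-center")


def missing_tags(resources, required=REQUIRED_TAGS):
    """Map each resource name to the required tags it lacks."""
    gaps = {}
    for res in resources:
        # Tag names are compared case-insensitively.
        present = {name.lower() for name in res.get("tags", {})}
        lacking = [tag for tag in required if tag not in present]
        if lacking:
            gaps[res["name"]] = lacking
    return gaps


def crossed_thresholds(spend, budget, thresholds=(0.5, 0.8, 1.0)):
    """Return the alert thresholds (fractions of budget) that spend has reached."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    return [t for t in thresholds if spend >= t * budget]


resources = [
    {"name": "openai-prod", "tags": {"Owner": "ml-platform", "cost-center": "4711"}},
    {"name": "openai-dev", "tags": {"owner": "data-science"}},
]

untagged = missing_tags(resources)          # which workloads lack accountability
alerts = crossed_thresholds(850, 1000)      # which budget alerts should have fired
```

Here `openai-dev` is flagged for a missing `cost-center` tag, and a spend of 850 against a budget of 1,000 has crossed the 50% and 80% thresholds but not 100%.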

Self-Audit Readiness Checklist

Before your next architectural review, ask your team these five critical questions:

- Is Azure Policy set to 'Deny' for any non-compliant AI service deployments?
- Does Microsoft Purview have an active data map of your AI training sets?
- Are you using APIM Policies to log headers for audit traceability?
- Is your Responsible AI Dashboard updated with the latest model iterations?
- Have you completed a FinOps Maturity Assessment for your cloud environment?
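If you want to track the checklist over time, the answers can be turned into a simple score. The sketch below weights every question equally and reports a percentage; that weighting and the short key names are my own assumptions, not part of any official framework, so adjust them to your risk profile.

```python
# Keys are hypothetical short names for the five checklist questions.
CHECKLIST = {
    "policy_deny": "Azure Policy set to 'Deny' for non-compliant AI deployments",
    "purview_data_map": "Microsoft Purview data map covers AI training sets",
    "apim_header_logging": "APIM policies log headers for audit traceability",
    "rai_dashboard_current": "Responsible AI Dashboard reflects latest model iterations",
    "finops_assessment": "FinOps Maturity Assessment completed",
}


def readiness_score(answers):
    """Percentage of checklist items answered 'yes' (unanswered counts as 'no')."""
    unknown = set(answers) - set(CHECKLIST)
    if unknown:
        raise KeyError(f"unknown checklist items: {sorted(unknown)}")
    done = sum(1 for key in CHECKLIST if answers.get(key, False))
    return round(100 * done / len(CHECKLIST))


def open_items(answers):
    """Descriptions of the controls that still need evidence."""
    return [text for key, text in CHECKLIST.items() if not answers.get(key, False)]


answers = {"policy_deny": True, "apim_header_logging": True, "finops_assessment": False}
score = readiness_score(answers)
todo = open_items(answers)
```

With two of five controls in place, the score is 40, and `todo` lists the three items to bring to the next review.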

Official Microsoft Diagnostics

To transition from architectural theory to a regulator-ready production environment, I recommend benchmarking your current state against these official Microsoft diagnostic modules:

AI Readiness Assessment

A strategic evaluation across 7 pillars including Governance, Data Foundations, and Model Management. Ideal for C-suite alignment.

Technical GenAI Assessment

A deep dive into your team's readiness to develop, run, and maintain Generative AI solutions in a production Azure environment.

Master the Sovereign Architecture

Ready to operationalize your Azure journey?

I help organizations turn stalled cloud initiatives into execution engines through the synthesis of GRC rigor and architectural excellence.